OK, buckle up. This is going to be a long one, even by my standards…

At the last PDC, Microsoft announced its next innovation: async support for C#, currently available as a CTP. There is also an accompanying white paper; I recommend reading it if you need an introduction.

Just for the record, here’s what we are talking about:

This roughly translates to:

As you can see, the compiler mainly translates an await into a new task and the remainder of the method into a continuation for that task.

To tell the truth, the actual generated code looks quite different; it is semantically equivalent, though.
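To make that translation concrete, here is a minimal sketch. It is not the listing from the white paper: `GetLengthAsync` is a made-up stand-in for any asynchronous operation, and the code assumes the released `Task.Run` API rather than the CTP's helper classes. It only illustrates the idea that the code after an await behaves like a continuation.

```csharp
using System;
using System.Threading.Tasks;

public static class AwaitTranslationSketch
{
    // Hypothetical stand-in for an asynchronous operation such as a download.
    public static Task<int> GetLengthAsync(string text)
    {
        return Task.Run(() => text.Length);
    }

    // async/await version: the code after the await runs once the task has completed.
    public static async Task<string> DescribeWithAwaitAsync(string text)
    {
        int length = await GetLengthAsync(text);
        return text + " has " + length + " characters";
    }

    // Roughly equivalent task version: the remainder of the method
    // is handed to the task as a continuation.
    public static Task<string> DescribeWithContinuation(string text)
    {
        return GetLengthAsync(text).ContinueWith(
            t => text + " has " + t.Result + " characters");
    }
}
```

Both methods return a task that eventually yields the same string; the difference is only in who writes the continuation, the compiler or the developer.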

Simple enough, but that’s only a simple case. Actually there’s more:

- If we are talking about a UI thread, the runtime ensures that the continuation runs on the UI thread as well. The task version would need to marshal the call to the UI thread explicitly, e.g. by calling Dispatcher.BeginInvoke.

- If the await is contained within a loop of some kind, the compiler also handles that, cutting the loop into asynchronous bits and pieces. The same goes for conditional calls.

That’s a nice set of features and there is no doubt that this approach works (there is a CTP after all). And reception within the community so far has been very positive.

However!

If this is really such a compelling feature, it should be quite easy to present a bunch of examples in which it pays off. Instead, what I found was a bunch of examples that made me wonder. Therefore I decided to put it to some…

Litmus tests…

To test whether async/await actually merits the praise, I set out to recheck and question the examples provided by various Microsoft people:

- Soma Segar’s introduction (actually taken from the white paper mentioned above)

- Netflix (used by Anders Hejlsberg during his PDC talk and available as sample within the CTP)

- Eric Lippert’s discussion

Note: Examples have a tendency to oversimplify. I chose this set to overcome that effect: Soma provides a simple introduction, Netflix is more “real world”, and Eric looks at it from a language designer’s point of view. Three different samples, three different people, three different perspectives.

Note 2: The code I present is from my test solution (download link at the end), thus it is not exactly a citation, rather it’s complemented with my bookkeeping code. I also didn’t address every question that may arise (such as exception handling) explicitly. This post got long enough as it is.

Soma Segar’s blog

Let’s begin with Soma’s introduction, as it is the simplest one:

He provides a “conventional” asynchronous solution that looks… quite convoluted, actually. Note that I prepended the necessary code to synchronize the call.

And his respective solution using async/await looks quite nice in comparison:

Easier to write, and easier to understand, no question about that.

However: Looking at the awfully convoluted task based version, I asked myself: “Who would write such code in the first place?”

The use case is to load some web pages and sum up their size. And to do this asynchronously, probably to avoid freezing the main thread. And the solution – awful and async/await alike – insists on getting the value for one web page asynchronously, but coming back to the UI thread to update the sum. Then starting the next fetch asynchronously, and again coming back to the UI thread to update the sum.

But then, why not put the whole loop in a separate thread? The only caveat would be the marshaling of UI calls (i.e. a call to Dispatcher.BeginInvoke, which I left out here in favor of simple tracing, but it’s addressed in the next example). Somewhat like this:
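The following is a minimal sketch of that approach rather than the exact code from the test solution. `download` and `report` are hypothetical parameters: the first stands in for a blocking call such as `WebClient.DownloadData`, the second for the tracing (or a marshaled UI update).

```csharp
using System;
using System.Collections.Generic;
using System.Threading.Tasks;

public static class PageSizeSketch
{
    // Runs the whole loop on a thread-pool thread instead of hopping back
    // to the caller after every single download.
    public static Task<int> SumPageSizesAsync(
        IEnumerable<string> urls,
        Func<string, byte[]> download,   // hypothetical blocking download
        Action<string> report)           // hypothetical tracing callback
    {
        return Task.Run(() =>
        {
            int total = 0;
            foreach (string url in urls)
            {
                byte[] data = download(url);   // blocks only this worker thread
                total += data.Length;
                report(url + ": " + data.Length + " bytes, total " + total);
            }
            return total;
        });
    }
}
```

The caller gets a `Task<int>` back and stays responsive; the loop body reads just like the synchronous one.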

This is very close to the await/async version, nearly equally easy to write, and certainly as easy to understand. And it did not need a language addition, merely a little thinking about what it is that shall actually be accomplished.

OK, one example that didn’t really convince me.

Netflix (sample in the CTP)

The Netflix sample is part of the CTP and was also used by Anders Hejlsberg during his PDC talk. The sample solution comes with a synchronous version, a conventional asynchronous version, and a new async/await version.

The synchronous version looks straightforward, with 43 relatively harmless LOC for the relevant two methods (LoadMovies and QueryMovies):

The conventional asynchronous version – do I even have to mention it? – is in comparison an ugly little piece of code. For the sake of completeness:

Twice as much code (85 LOC), a mixture of event handler registrations with nested lambdas and invokes. It takes considerable time to fully understand it. No one in his right mind would want to have to write this.

The async/await version – again – turns the synchronous version into a similarly straightforward-looking asynchronous version:

Again the initial verdict: The async/await version looks nice and clean compared to the ugly task based version, and very similar to the synchronous one. Hail to async/await.

But again, looking at the ugly asynchronous version, I asked myself, “Who would actually write such code? And why? Masochistic tendencies?”

Let’s take a step back and look at the use case: Call a web service asynchronously to avoid freezing the UI (as the synchronous version does), update the UI with the result, and call the web service again if there is more information available.

The task based version tries to manually dismantle this loop into a sequence: an asynchronous call, followed by a callback on the main thread to update the UI, followed again by the next asynchronous call. Add cancelation and exception handling and it is surprising that it isn’t even uglier than it already is.

But! Why even try to dismantle the loop? Why not put the whole loop in a separate thread? Yes, that would solve the use case, and yes, it would be just as clean as the synchronous version. The only caveat is that each interaction with the UI has to be marshaled into the main thread, but I can live with that. Here it is:
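A minimal sketch of this shape, not the code from the sample: `queryPage` and `showPage` are hypothetical delegates, and the UI marshaling goes through a `SynchronizationContext` captured on the UI thread (in WPF, `Dispatcher.BeginInvoke` would do the same job).

```csharp
using System;
using System.Collections.Generic;
using System.Threading;
using System.Threading.Tasks;

public static class BackgroundLoopSketch
{
    // 'queryPage' is a hypothetical blocking service call that returns one page
    // of results, or an empty list when there is nothing more to fetch.
    // 'showPage' touches the UI and is therefore posted to 'uiContext'.
    public static Task<int> LoadAllAsync(
        Func<int, IList<string>> queryPage,
        Action<IList<string>> showPage,
        SynchronizationContext uiContext,
        CancellationToken cancel)
    {
        return Task.Run(() =>
        {
            int pageCount = 0;
            while (!cancel.IsCancellationRequested)
            {
                IList<string> page = queryPage(pageCount);   // blocks the worker only
                if (page.Count == 0) break;                  // no more results
                uiContext.Post(_ => showPage(page), null);   // marshal to the UI thread
                pageCount++;
            }
            return pageCount;
        });
    }
}
```

From a UI event handler this would be called with `SynchronizationContext.Current`; the loop itself stays as linear as the synchronous version.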

I left the QueryMovies method out for brevity, as it is similar to the synchronous version above.

Again this is very close to the await/async version, again nearly equally easy to write, and – again – certainly as easy to understand. And – again (pardon the repetition) – it did not need a language addition. Merely – ag… ok, ok – a little thinking about what it is that shall actually be accomplished.

Another example that didn’t convince me. But two is still coincidence.

Eric Lippert’s discussion

Eric has a slightly different use case: For a list of web addresses, fetch the web page and archive it. There should be only one fetch at a time, and only one archive operation, but fetch and archive may overlap (described here). This is more about overlapping different operations than about avoiding a frozen UI, as in the previous examples.

The synchronous version is this simple:

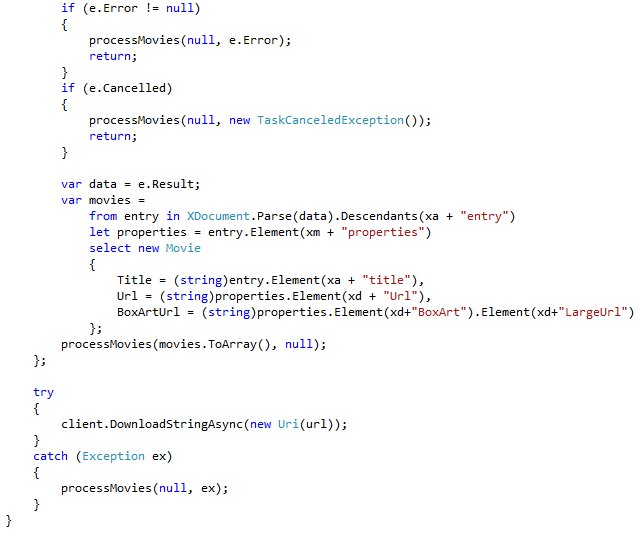

He constructed the asynchronous version (not even mentioning async/await) as a state machine, described in the post I just mentioned. I’ll spare you the reiteration this time; follow the link if you have doubts that it is far more convoluted than the simple loop above. But of course, async/await comes to the rescue:
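A sketch of what such an async/await version might look like. This is not a verbatim copy of Eric's code: `fetchAsync` and `archiveAsync` are hypothetical delegates standing in for the real operations, and unlike a fire-and-forget method this one also awaits the last archive so that a caller can observe completion.

```csharp
using System;
using System.Collections.Generic;
using System.Threading.Tasks;

public static class ArchiveAwaitSketch
{
    public static async Task ArchiveDocumentsAsync(
        IList<string> urls,
        Func<string, Task<string>> fetchAsync,   // hypothetical fetch operation
        Func<string, Task> archiveAsync)         // hypothetical archive operation
    {
        Task archive = null;
        foreach (string url in urls)
        {
            // Only one fetch at a time: the loop does not advance before it is done.
            string document = await fetchAsync(url);

            // Only one archive at a time: wait for the previous one first.
            if (archive != null)
                await archive;

            // Start archiving, but do not wait; the next fetch may overlap with it.
            archive = archiveAsync(document);
        }

        if (archive != null)
            await archive;
    }
}
```

Note that the next fetch only starts after the loop has waited for the archive before the current one.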

Nice and simple. But can you deduce the logic behind this? I have to admit it required some mind twists on my part. Now, to make it short, here is the task version:

Not quite as simple as the async/await version, but if you look closely, it’s actually a very close translation into continuations. And… equally inefficient. You may have noticed the comments I put in there, stating that there is some unnecessary synchronization between a fetch and an earlier archive operation.

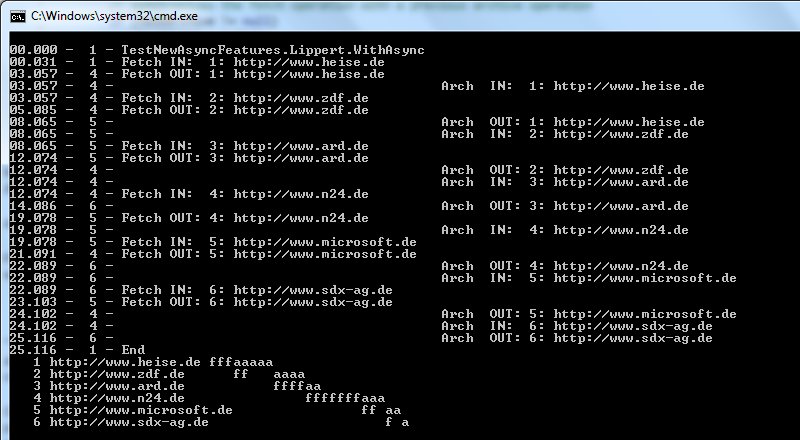

So, this time I actually went a little bit further: This is why I added a little test data, bookkeeping, and a nice little printout to visualize the sequence. Here’s what the async/await version (and similarly the task version) produces:

“f” stands for fetch, “a” for archive. The lower part shows the operations according to their start and end time. You can see nicely how tasks follow other tasks, and how fetch and archive run in parallel. No fetch overlaps another, no archive overlaps another archive operation. Well. Have a look at line 3. See how the fetch starts after the archive in line 1 was finished? It could have started earlier, couldn’t it? Same in line 6. That’s the unnecessary synchronization I mentioned above.

A little working on the task version solves that by chaining the fetches and archives onto their respective tasks:

However, now it becomes a little more complex and harder to understand.

Going back to the drawing board. What again is the use case? It’s actually a pipeline, with multiple stages (two in this case), each stage throttled to process only a certain number of input values at a time (one in this case). The cookbook solution is to put a queue in front of each stage, and have separate workers (again, in our case just one) take input from this queue, process it, and place the result in the next stage’s queue. Like this:
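A minimal sketch of such a two-stage pipeline, assuming `BlockingCollection<T>` as the queue type; `fetch` and `archive` are hypothetical synchronous delegates, and the code is an illustration rather than the listing from the test solution.

```csharp
using System;
using System.Collections.Concurrent;
using System.Collections.Generic;
using System.Threading.Tasks;

public static class PipelineSketch
{
    public static Task RunAsync(
        IEnumerable<string> urls,
        Func<string, string> fetch,    // hypothetical blocking fetch
        Action<string> archive)        // hypothetical blocking archive
    {
        var fetchQueue = new BlockingCollection<Action>();
        var archiveQueue = new BlockingCollection<Action>();

        // Stage 1 input: one lambda per URL; each one feeds stage 2 when it is done.
        foreach (string url in urls)
        {
            string current = url;
            fetchQueue.Add(() =>
            {
                string document = fetch(current);
                archiveQueue.Add(() => archive(document));
            });
        }
        fetchQueue.CompleteAdding();

        // One worker per stage, so each stage processes one item at a time.
        Task fetchWorker = Task.Run(() =>
        {
            try
            {
                foreach (Action work in fetchQueue.GetConsumingEnumerable())
                    work();
            }
            finally
            {
                archiveQueue.CompleteAdding();   // lets the archive worker finish
            }
        });

        Task archiveWorker = Task.Run(() =>
        {
            foreach (Action work in archiveQueue.GetConsumingEnumerable())
                work();
        });

        return Task.WhenAll(fetchWorker, archiveWorker);
    }
}
```

Throttling a stage differently would only mean starting more workers on its queue; the fetch worker never waits for an archive operation.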

Two queues of lambdas, two tasks processing these queues, the first lambda placing new entries into the second queue upon completion. If you ask me, this is way closer to the proposed use case than either the task based versions or the async/await version. And the logic behind it – intention as well as control flow –, which in this case is a little more complicated than in the previous examples, is also far easier to comprehend. And the best part: Look at the output:

Fetch and archive start independently of each other, making the solution more efficient than the async/await version. Why? Because I actually thought about the problem and the solution, rather than simply throwing asynchronicity at the code.

If anything, this example raises even more concerns than the other ones.

Verdict

Three different samples. Three times presenting some conventional task based asynchronous code – code that is rather convoluted, ugly, and hard to understand. Three times a nice async/await version that clearly makes the code nicer.

But also three times it “only” took a little thinking to come up with a far nicer task based version than the proposed convolution. Two times very close to the async/await version. The third time actually more efficient than that.

One could actually get the impression that the convoluted task based code has been deliberately made ugly in order to provide a better motivation for the new features. I wouldn’t go that far, but still, it raises some questions, as to how these examples came into being. Perhaps a back-port of what the compiler produces for async/await?

Granted, each example I examined may have its own compromises which slightly invalidate it (as is often the case with examples); had I only found one, I wouldn’t have bothered writing this post. But: One is an incident, two is coincidence, three is a trend. And I did not stumble over one example yielding a different result.

So, to summarize my concerns:

- I showed that the value of async/await is less compelling than presented. This raises the question whether it is still compelling enough to merit a language extension.

I judge that value by its ability to make code shorter, easier to write, easier to comprehend. Or to quote Eric Lippert:

“Features have to be so compelling that they are worth the enormous dollar costs of designing, implementing, testing, documenting and shipping the feature. They have to be worth the cost of complicating the language and making it more difficult to design other features in the future.” http://blogs.msdn.com/b/ericlippert/archive/2008/10/08/the-future-of-c-part-one.aspx

- The way I verified my concerns was actually to take one step back and think about the demand. I hate to think that async/await actually encourages developers to think less and in turn write less than optimal code – especially the less experienced developers who’ll have a hard time understanding the implications of those keywords in the first place.

To make that clear: I did not show anything that suggests that the async/await language feature is per se wrong. Nor did I prove that every use case suffers from my concerns (only that Microsoft has not yet presented one that doesn’t – or that I failed to find it).

Conclusion

Here is my conclusion: I could live with concern #1, but #2 is something that gives me the creeps. There are two things I would like Microsoft to do to make the feature work (or drop it altogether):

- Make more obvious what the code actually does (e.g. see the comments here for some naming suggestions – although my concern goes beyond simple naming issues).

- Rethink the task based samples you are basing your reasoning on. We don’t need a feature that makes convoluted code simpler; we need features that simplify the code we would write in the first place.

BTW: Whether async/await is baked into the language or not, this endeavor showed one thing quite clearly: Asynchronicity is important, and becoming even more so. And Microsoft certainly did accomplish something great with the TPL, on which all these discussions build without even mentioning it anymore. And as Reed pointed out, Tasks play a major role in the CTP as well.

Finally: Here is the download link for those who’d like to recheck my findings.

Merry Christmas!

That’s all for now folks,

AJ.NET

Try rewriting your versions for a platform that doesn’t support synchronous I/O like Silverlight. When you cannot call WebClient.DownloadString, things get messy really fast.

Comment by grauenwolf — January 3, 2011 @ 9:00 pm

I think this article misses the point in quite a spectacular way. In at least 2 of your examples, you mandate swapping out async ops and replacing them with their sync counterparts and running them on a separate thread. This does of course emulate async behaviour and is absolutely fine for client side apps that are not particularly resource heavy. However, in the case where you’re dealing with many operations (think servers, bulk downloaders etc), simply putting synchronous code out on its own thread is a huge waste of resources with large numbers of threads blocked for the large part of their lifetime. The new async extensions shine in this regard, allowing threads to be returned to the pool to be reused while IO operations complete rather than blocking. This means you can (for instance) deal with thousands of connected clients with a very small number of threads, rather than needing to spin up new threads simply to work around the fact that synchronous methods don’t quite cut it. By cherry picking a few toy examples which never really needed async IO in the first place, your article provides a somewhat misleading analysis into the benefits of the new async features.

Comment by spender — October 24, 2011 @ 4:21 pm

@spender: Thanks for the feedback.

Anyway, I think your reasoning is off the mark.

Firstly, I’m using the TPL, not some dedicated separate thread. This is the same stuff the new async features use under the hood (which in turn uses the threadpool). Therefore there is no difference with regard to how those requests are processed.

And your second point actually (kind of) hits the bull’s eye: I was not “cherry picking a few toy examples”, I was evaluating the whole bunch of examples that Microsoft used to sell the async feature (then).

Also – and I may have failed to make this clear enough – I did not criticise the feature itself. I criticised the way Microsoft tried to _sell_ this feature. (As I wrote: “I did not show anything that suggests that the async/await language feature is per se wrong.”)

And by the way: I’m still convinced that Microsoft did a poor job selling this feature when I wrote this post. But if you care to take a look at what they presented with Windows 8 WinRT, they actually do have a great story around async. Have a look at http://channel9.msdn.com/events/BUILD/BUILD2011?sort=sequential&direction=desc&term=&t=async .

Regards,

Comment by ajdotnet — October 25, 2011 @ 2:19 pm
